Stein's unbiased risk estimate

In statistics, Stein's unbiased risk estimate (SURE) is an unbiased estimator of the mean-squared error of "a nearly arbitrary, nonlinear biased estimator."[1] In other words, it provides an indication of the accuracy of a given estimator. This is important since the true mean-squared error of an estimator is a function of the unknown parameter to be estimated, and thus cannot be determined exactly.

The technique is named after its discoverer, Charles Stein.[2]

Formal statement

Let  be an unknown parameter and let

be an unknown parameter and let  be a measurement vector which is distributed normally with mean

be a measurement vector which is distributed normally with mean  and covariance

and covariance  . Suppose

. Suppose  is an estimator of

is an estimator of  from

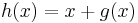

from  , and can be written

, and can be written  , where

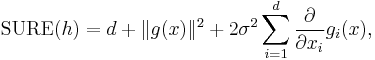

, where  is weakly differentiable. Then, Stein's unbiased risk estimate is given by

is weakly differentiable. Then, Stein's unbiased risk estimate is given by

where  is the

is the  th component of the function

th component of the function  , and

, and  is the Euclidean norm.

is the Euclidean norm.

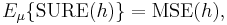

The importance of SURE is that it is an unbiased estimate of the mean-squared error (or squared error risk) of  , i.e.

, i.e.

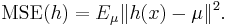

with

Thus, minimizing SURE can act as a surrogate for minimizing the MSE. Note that there is no dependence on the unknown parameter  in the expression for SURE above. Thus, it can be manipulated (e.g., to determine optimal estimation settings) without knowledge of

in the expression for SURE above. Thus, it can be manipulated (e.g., to determine optimal estimation settings) without knowledge of  .

.
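The unbiasedness property can be checked numerically. The following is a minimal sketch, not taken from the cited references, that assumes the simple linear shrinkage estimator h(x) = a·x (so that g(x) = (a − 1)·x) and noise covariance σ²I. The function names, the parameter vector `theta`, and the values of `a` and `sigma` are illustrative assumptions. It averages SURE over many simulated measurements and compares the result with the closed-form mean-squared error of this particular estimator.

```python
import random

def sure_linear_shrinkage(x, a, sigma):
    """SURE for the (assumed) estimator h(x) = a * x, i.e. g(x) = (a - 1) * x.

    Uses SURE = d*sigma^2 + ||g(x)||^2 + 2*sigma^2 * sum_i dg_i/dx_i.
    """
    d = len(x)
    g_norm_sq = (a - 1.0) ** 2 * sum(xi * xi for xi in x)
    divergence_g = d * (a - 1.0)  # each dg_i/dx_i equals (a - 1)
    return d * sigma ** 2 + g_norm_sq + 2.0 * sigma ** 2 * divergence_g

def monte_carlo_check(theta, a, sigma, n_trials=20000, seed=0):
    """Average SURE and the true squared error over simulated measurements."""
    rng = random.Random(seed)
    sure_sum = 0.0
    err_sum = 0.0
    for _ in range(n_trials):
        # Draw x ~ N(theta, sigma^2 I), one coordinate at a time.
        x = [rng.gauss(t, sigma) for t in theta]
        sure_sum += sure_linear_shrinkage(x, a, sigma)
        # The true squared error needs theta, which is unknown in practice.
        err_sum += sum((a * xi - ti) ** 2 for xi, ti in zip(x, theta))
    return sure_sum / n_trials, err_sum / n_trials

# Illustrative values (assumptions, chosen only for the demonstration).
theta = [1.0, -2.0, 0.5, 3.0, 0.0]
a, sigma = 0.7, 1.0

avg_sure, avg_sq_err = monte_carlo_check(theta, a, sigma)

# Closed-form MSE of h(x) = a * x: a^2 * d * sigma^2 + (a - 1)^2 * ||theta||^2.
exact_mse = a ** 2 * len(theta) * sigma ** 2 + (a - 1.0) ** 2 * sum(t * t for t in theta)

print("average SURE:          ", avg_sure)
print("average squared error: ", avg_sq_err)
print("closed-form MSE:       ", exact_mse)
```

Both averages should agree with the closed-form value up to Monte Carlo error. Only the second average requires knowledge of the true parameter; SURE is computed from the measurement alone.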

Applications

A standard application of SURE is to choose a parametric form for an estimator, and then optimize the values of the parameters to minimize the risk estimate. This technique has been applied in several settings. For example, a variant of the James–Stein estimator can be derived by finding the optimal shrinkage estimator.[2] The technique has also been used by Donoho and Johnstone to determine the optimal shrinkage factor in a wavelet denoising setting.[1]
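As an illustration of this parameter-tuning idea, the sketch below selects the threshold of a soft-thresholding estimator by minimizing SURE. It is a simplified example under stated assumptions, not the full procedure of Donoho and Johnstone: the noise level `sigma` is assumed known, the search runs over all candidate thresholds without any upper bound, and the function names and test signal are hypothetical. For soft thresholding at level t, g_i(x) = −x_i when |x_i| ≤ t and −t·sign(x_i) otherwise, so SURE reduces to dσ² + Σ min(|x_i|, t)² − 2σ²·#{i : |x_i| ≤ t}.

```python
import random

def soft_threshold(x, t):
    """Shrink each coordinate toward zero by t; coordinates with |x_i| <= t become 0."""
    return [(abs(xi) - t) * (1.0 if xi > 0 else -1.0) if abs(xi) > t else 0.0
            for xi in x]

def sure_soft_threshold(x, t, sigma):
    """SURE of soft thresholding at level t, assuming x ~ N(theta, sigma^2 I)."""
    d = len(x)
    g_norm_sq = sum(min(abs(xi), t) ** 2 for xi in x)   # ||g(x)||^2
    n_below = sum(1 for xi in x if abs(xi) <= t)        # minus the divergence of g
    return d * sigma ** 2 + g_norm_sq - 2.0 * sigma ** 2 * n_below

def select_threshold(x, sigma):
    """Return the candidate threshold with the smallest SURE.

    Between consecutive values of |x_i| the SURE expression is nondecreasing
    in t, so it suffices to test t = 0 and the observed magnitudes.
    """
    candidates = [0.0] + sorted(abs(xi) for xi in x)
    return min(candidates, key=lambda t: sure_soft_threshold(x, t, sigma))

# Hypothetical sparse signal: 10 large coefficients and 190 zeros.
rng = random.Random(1)
sigma = 1.0
theta = [6.0] * 10 + [0.0] * 190
x = [rng.gauss(t, sigma) for t in theta]

t_hat = select_threshold(x, sigma)
estimate = soft_threshold(x, t_hat)

# These losses use theta and are available only in simulation.
loss_raw = sum((xi - ti) ** 2 for xi, ti in zip(x, theta))
loss_denoised = sum((ei - ti) ** 2 for ei, ti in zip(estimate, theta))

print("selected threshold:", t_hat)
print("squared error of raw measurement:", loss_raw)
print("squared error after thresholding:", loss_denoised)
```

The threshold is chosen from the data alone. The true parameter enters only in the final comparison, which is included to show the effect of the selected threshold on a simulated signal.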

References

1. Donoho, David L.; Johnstone, Iain M. (December 1995). "Adapting to Unknown Smoothness via Wavelet Shrinkage". Journal of the American Statistical Association 90 (432): 1200–1244. doi:10.2307/2291512. JSTOR 2291512.

2. Stein, Charles M. (November 1981). "Estimation of the Mean of a Multivariate Normal Distribution". The Annals of Statistics 9 (6): 1135–1151. doi:10.1214/aos/1176345632. JSTOR 2240405.